There is a peculiar unease many of us recognise but rarely articulate. We are entertained constantly, informed endlessly, stimulated relentlessly — and yet, afterward, something feels thin. The sensation is not boredom. It is not dissatisfaction. It is more like emptiness after fullness, or satiety without nourishment. Everything works. Nothing stays.

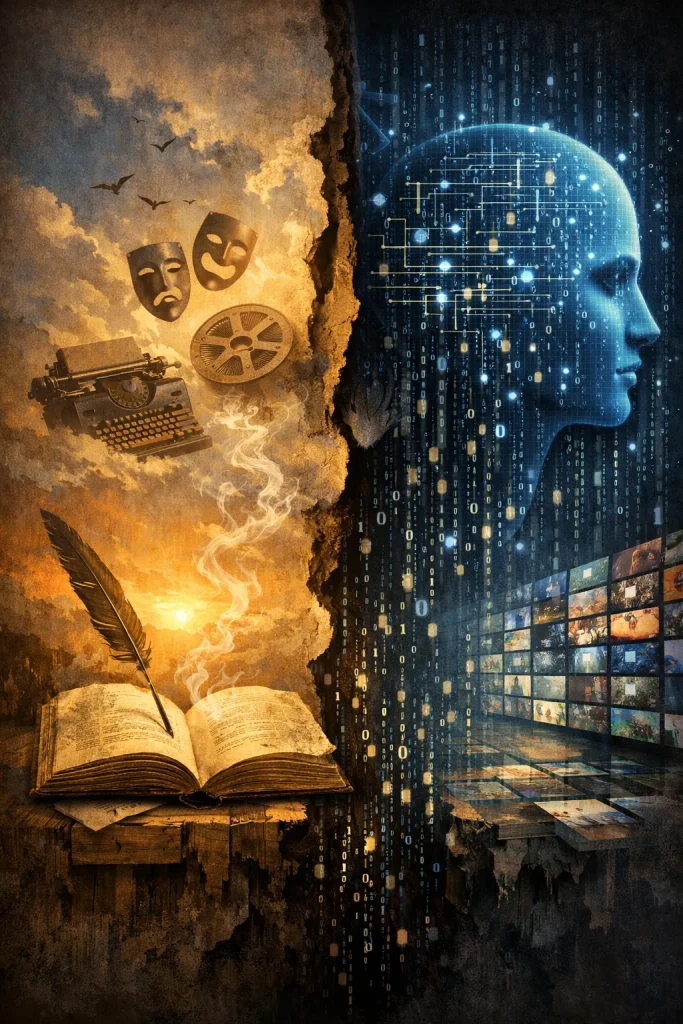

This essay is not an attack on technology. Nor is it nostalgia for some imagined golden age. It is an elegy for something quietly displaced: meaning shaped by comprehension, replaced by experience shaped by correlation.

Big Data did not arrive as a villain. It arrived as a promise. At its heart was humility — the idea that instead of imposing theories on the world, we would learn from experience itself. Patterns would reveal what authority missed. Data would democratise insight. From science to commerce, from language to culture, we would finally listen.

And in many domains, that promise was kept.

Yet something subtle happened along the way. Measurement stopped serving understanding and began replacing it. Optimisation eclipsed comprehension. And the systems we built became extraordinarily good at reproducing the effects of meaning without ever encountering meaning itself.

Big Data, by definition, does not understand. It does not grasp intention, cost, sacrifice, or consequence. It recognises patterns — what follows what, what tends to keep us engaged, what reliably provokes return. This is not a flaw. It is simply what it is. But when such systems are tasked with shaping human experience, the absence of comprehension matters. Deeply. Fundamentally.

Because meaning cannot be reverse-engineered from response alone.

Human creativity has always worked from the inside out. A writer does not begin by asking what will retain attention. They begin with conflict, doubt, obsession, shame, longing — things lived, suffered, or at least deeply imagined. The work may fail. It often does. It may disturb more than delight. But when it succeeds, it leaves residue. It lingers because it reorganises something in us, the readers.

Big Data works from the outside in. It observes behaviour and amplifies what correlates with engagement. It does not ask why something matters. It cannot. It only learns that something works. And so it optimises. That’s it.

The result is content that can be gripping, exhilarating, even moving in the moment — yet strangely difficult to remember. We consume it easily, but we do not live with it. We do not return to it. It leaves no scar, no silence, no aftertaste.

This is not because it is poorly made. Often, it is exquisitely made.

Consider contemporary entertainment, particularly the kind perfected by platforms like Netflix. Stories are paced with astonishing precision. Emotional peaks arrive just in time. Cliffhangers are calibrated. Characters feel familiar immediately, as if we have known them for years. Everything flows.

And that is precisely the problem.

These narratives are not shaped by what they have to say, but by how smoothly they can pass through us. They are engineered to prevent friction — to keep us watching, not to ask anything of us. They move constantly because stillness risks disengagement. They reassure because discomfort risks abandonment. They resolve because ambiguity does not test well.

They do not know what they are about. They only know how to continue.

We sense this difference intuitively. We still read authors long dead not because they are old, but because they are unfinished — because they leave questions unresolved inside us. Their works demand re-entry. They grow as we do. By contrast, many modern hits vanish almost as soon as they are consumed. They were designed to.

The same logic has seeped into news. What was once structured around significance has increasingly become structured around reaction. This is not merely about bias or money; those have always existed. It is about optimisation targets. When attention becomes the metric, events are selected for emotional charge rather than explanatory depth. Complexity gives way to clarity. Ambiguity gives way to outrage or comfort. We are informed constantly, yet understand less.

This is why the term “infotainment” feels so accurate and so bleak. Information has not disappeared; comprehension has. Big Data can identify what provokes attention, but it cannot know what deserves it. I have chosen not to comment here on the wilful manipulation of news, as it falls outside the scope of this essay.

Even artificial intelligence, in its most impressive forms, reflects this tension. Systems like ChatGPT can generate fluent, responsive, even insightful-sounding language. They can echo wisdom beautifully. But they do so without having paid any of its costs. They do so without a semblance of understanding or comprehension. Just correlation. There is no lived silence behind the words, no risk taken, no wound carried forward. But this does not make them deceptive. It makes them hollow in a very specific way.

The difference matters because meaning is not just a pattern — it is a pattern understood and paid for.

This distinction allows us to understand what we are really losing. It is not creativity per se. Big Data does not eliminate creativity; it outcompetes it economically and culturally. It filters out what cannot be predicted, measured, or safely optimised in advance. True creativity is inconvenient. It often alienates before it attracts. It resists immediate pleasure. It takes time to unfold. It cannot be A/B tested meaningfully because it changes the person who encounters it. It sometimes hurts. It makes life worth living.

Big Data systems cannot wait. They cannot risk. They cannot remember. And so they select against depth without ever intending to.

The tragedy is not malice. It is substitution.

Shallowness and hollowness are not the same thing. Shallowness is surface without depth. Hollowness is form without substance. Big Data excels at filling space — screens, hours, attention — without filling meaning. It offers intensity without integration, stimulation without transformation, mission without vision.

It would be comforting to believe this is confined to entertainment or media. It is not. The fast food industry is another glaring example: cooking is replaced by behavioural engineering. But this essay does not need to catalogue every domain it touches. To do so would be to fall into the very excess it mourns. It is enough to recognise the pattern.

And yet, something endures.

Despite saturation, people still hunger for work that slows them down, that resists them, that asks for something in return. Such work survives in smaller spaces, quieter forms, often outside the algorithmic spotlight. It survives because humans have not stopped needing meaning, even when surrounded by substitutes for it.

Big Data is not evil. It is powerful. It is useful. But it is not wise. It lacks understanding. A civilisation optimised entirely for engagement may one day forget entirely how to understand. And what cannot be understood cannot be lived.

This is not a call to abandon technology. It is a call to recognise its limits — and to notice, before it fades completely, what those limits have quietly displaced.

Source: https://medium.com/@mahesh.rajasuriya/the-shallowness-and-hollowness-of-big-data-from-chatgpt-to-netflix-5b9b7530670e